The measurement gap

In May 2025, IBM published its global CEO Study, surveying 2,000 CEOs across 33 countries and 24 industries. The headline: only 25% of AI initiatives have delivered their expected ROI. Just 16% have scaled enterprise-wide. A Summer 2025 MIT report went even further, estimating that 95% of generative AI pilots are failing outright.

These aren't early-stage growing pains. Enterprise AI has been scaling for over two years. The investments are real. The measurable results, largely, aren't.

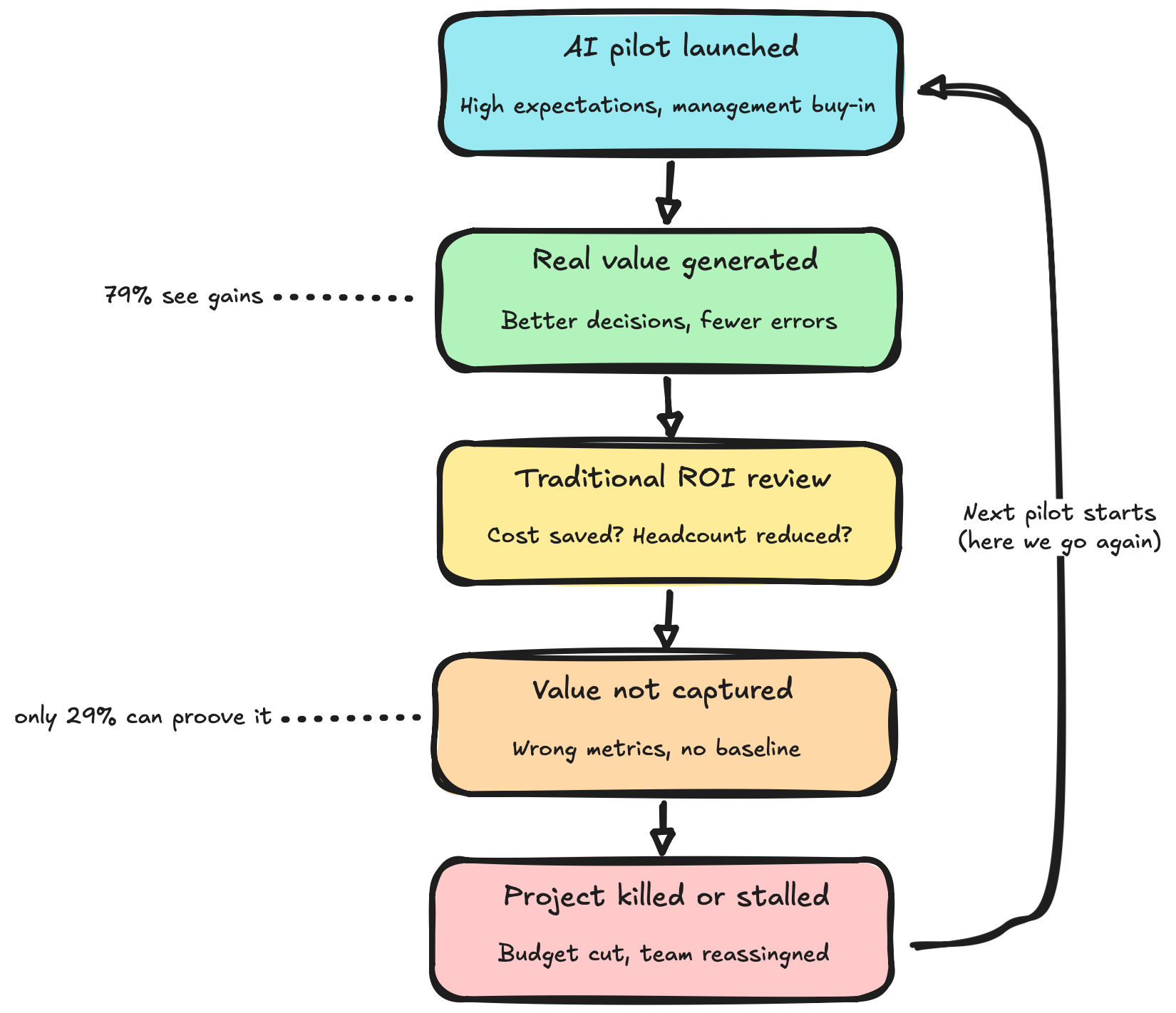

But here's where it gets interesting. IBM's Think Circle report from Q4 2025 found that 79% of executives report seeing productivity gains from AI. At the same time, only 29% say they can measure ROI confidently. That's not a performance gap. That's a measurement gap. AI is working. We just can't prove it because we're measuring it wrong.

Why traditional ROI fails for AI

Traditional IT investments are relatively straightforward to evaluate. Buy a server, run a workload, measure the throughput per dollar. Deploy a CRM, count the pipeline it generates. The inputs and outputs are clean, attributable, and immediate.

AI doesn't work that way. Its value profile is fundamentally different, and applying the same ROI logic produces misleading results.

Value compounds over time. An AI model that assists with customer service gets better as it processes more interactions. A coding assistant reduces onboarding time for every new engineer who joins. The ROI in month one is a fraction of the ROI in month twelve, yet most business cases are not built on long-term projections.
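To make the compounding point concrete, here is a minimal sketch of cumulative ROI measured month by month. Every figure in it (the upfront cost, the running cost, the first-month benefit, the 10% monthly growth) is a hypothetical assumption chosen for illustration, not data from any of the studies cited here.

```python
def cumulative_roi(upfront_cost, monthly_cost, first_month_benefit, monthly_growth, months):
    """Return cumulative ROI (net gain / total cost) at the end of each month."""
    total_cost = upfront_cost
    total_benefit = 0.0
    benefit = first_month_benefit
    roi_by_month = []
    for _ in range(months):
        total_cost += monthly_cost
        total_benefit += benefit
        roi_by_month.append((total_benefit - total_cost) / total_cost)
        # Assumption: the monthly benefit grows as the system sees more usage.
        benefit *= 1 + monthly_growth
    return roi_by_month


# Hypothetical project: all numbers are illustrative.
roi = cumulative_roi(
    upfront_cost=100_000,
    monthly_cost=5_000,
    first_month_benefit=8_000,
    monthly_growth=0.10,
    months=12,
)
print(f"Month 3 ROI:  {roi[2]:.0%}")
print(f"Month 12 ROI: {roi[11]:.0%}")
```

Under these assumptions the project is deep in the red at a month-three review and only turns positive around month twelve, so the timing of the review decides the verdict as much as the project does.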

Benefits are often intangible. Better decisions. Fewer errors. Faster learning curves. Reduced cognitive load. Improved compliance. These are real outcomes, but they don't appear on a traditional balance sheet.

When a CFO asks "what did we save?", the honest answer is often: we made 500 people 15% more effective at things that are hard to quantify.

Value appears in unexpected places. An organisation deploys AI for document summarisation. The intended benefit is time savings. The actual benefit turns out to be better cross-departmental knowledge transfer and faster decision-making in leadership meetings. Nobody planned to measure that.

The counterfactual is invisible. How do you measure the deal you didn't lose because your proposal was more tailored? The compliance breach that didn't happen because an AI flagged an anomaly? The employee who didn't quit because their daily work became less tedious? Traditional ROI requires a clean before-and-after. AI benefits often live in the "what didn't go wrong" category.

The consequence? Organisations that evaluate AI purely through traditional metrics will systematically undervalue it. They will either kill promising initiatives or keep investing with no framework for deciding where the money should go.

The pilot purgatory problem

The measurement gap has a very concrete downstream effect: pilot purgatory.

S&P Global data shows that 42% of companies abandoned most of their AI projects in 2025, up from just 17% the year prior. The top reasons: total cost and unclear value. Not "it didn't work." Not "the technology failed." But "we couldn't prove it was worth it."

Meanwhile, Deloitte's global survey found that while 85% of organisations increased AI investment in the past year and 91% plan to increase again, only 6% achieved payback in under a year. Most reported a two-to-four-year timeline, far longer than the seven-to-twelve-month window typically expected for standard IT investments.
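The payback comparison above can be sketched as a simple calculation: the first month in which cumulative net benefit covers the upfront cost. The helper name `payback_month` and both cash-flow profiles are hypothetical, picked only to show how a slowly ramping benefit stretches the timeline.

```python
def payback_month(upfront_cost, monthly_net_benefits):
    """Return the 1-based month in which cumulative net benefit first
    covers the upfront cost, or None if it never does."""
    cumulative = 0.0
    for month, benefit in enumerate(monthly_net_benefits, start=1):
        cumulative += benefit
        if cumulative >= upfront_cost:
            return month
    return None


# Hypothetical standard IT project: flat benefit from day one.
standard_it = payback_month(200_000, [20_000] * 24)

# Hypothetical AI project: benefit starts small and ramps up each month.
ai_project = payback_month(600_000, [2_000 * m for m in range(1, 49)])

print(f"Standard IT payback: month {standard_it}")
print(f"AI project payback:  month {ai_project}")
```

With these illustrative inputs the flat-benefit project pays back inside the usual seven-to-twelve-month window, while the ramping one needs about two years, even though its monthly benefit ends up far larger.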

This creates a toxic dynamic. Leadership expects fast, visible returns. The measurement framework captures a fraction of the actual value. Projects delivering real impact get cut because the impact doesn't show up in the metrics being tracked. The organisation concludes "AI doesn't deliver ROI" when the real conclusion should be "we don't know how to measure AI ROI."

The pressure is intensifying. KPMG research shows that 90% of organisations now rate investor pressure to demonstrate AI ROI as important or very important, up sharply from 68% just one quarter earlier. CFOs need to connect AI spending to measurable business outcomes, forecast returns, and identify waste before it costs millions. Without a robust measurement approach, they're flying blind.

IBM's June 2025 research found that projects which initially showed spectacular pilot ROI saw those returns moderate at production scale, settling around 7%, below the typical 10% cost-of-capital hurdle rate. The top decile of organisations, however, achieved approximately 18%, well above it. The difference was not better models. It was better measurement and deeper organisational integration.

Frameworks that exist but aren't widely adopted

The good news: the thinking on this problem is maturing. Several credible frameworks now exist for measuring AI value beyond traditional cost-benefit analysis.

Microsoft's Agentic AI ROI Framework

In a February 2025 post on the Azure AI Foundry Blog, Microsoft proposed a structured approach specifically for agentic AI applications. The framework follows five steps: define objectives and KPIs tied to business outcomes, establish baselines before deployment, track tangible returns (cost reduction, throughput, error rates), track intangible returns (satisfaction, decision quality, knowledge retention), and iterate as the technology evolves.

Microsoft's key insight: the types of gains and costs differ for every use case. There is no universal AI ROI formula. Each scenario requires its own metric mapping, which is exactly why most organisations struggle. They try to apply one generic template across fundamentally different value types.

IBM's Three-Horizon ROI Model

IBM's "From AI Projects to Profits" research introduces a distinction between short-term efficiency ROI and long-term transformation ROI. The critical finding: organisations that moved AI from peripheral automation into core business processes saw sustainable, compounding returns, but it took longer and required more coordination. The top performers didn't just deploy AI. They redesigned workflows around it.

IBM also highlights a shift in how leading organisations think about measurement: from project-based ROI calculations to bottom-line impact delivered to shareholders. The question isn't "did this project pay for itself?" It's "is AI measurably improving our operating profit?"

ISACA's Three-Category ROI Model

ISACA proposes evaluating AI ROI across three distinct categories:

- Financial ROI (revenue, cost reduction, productivity savings)

- Operational ROI (process improvements, quality, risk reduction)

- Capability ROI (workforce skill development, innovation culture, organisational learning)

This third category is what most frameworks miss entirely. A team that learns to work effectively with AI isn't just more productive today. It's building capability that compounds across every future initiative. That's arguably the highest-value return of all, and almost no one tracks it.
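One way to keep the three categories visible is to give each its own slot in a scorecard and flag any slot left empty. The sketch below is an illustration only: the `RoiScorecard` class, its field names, and the sample metrics are hypothetical and are not part of ISACA's model.

```python
from dataclasses import dataclass, field


@dataclass
class RoiScorecard:
    """Hypothetical scorecard with one metric dictionary per ROI category."""
    financial: dict[str, float] = field(default_factory=dict)
    operational: dict[str, float] = field(default_factory=dict)
    capability: dict[str, float] = field(default_factory=dict)

    def untracked_categories(self) -> list[str]:
        """Return the names of categories that have no metrics recorded."""
        return [name for name, metrics in vars(self).items() if not metrics]


# Illustrative values: a team tracking money and process, but not capability.
card = RoiScorecard(
    financial={"annual_cost_savings_usd": 120_000},
    operational={"error_rate_change_pct": -12.0},
)
print(card.untracked_categories())  # ['capability']
```

The point of the design is that a missing category shows up as an explicit gap in the report instead of silently counting as zero value.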

What practitioners can do now

Based on the research, a few principles emerge for anyone trying to get AI ROI measurement right:

Measure before you deploy. Establish clear baselines for the processes AI will touch. Without a "before" picture, you cannot credibly demonstrate an "after." This is the step most organisations skip, and the one they regret most.
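A minimal sketch of why the baseline matters: a change can only be computed for metrics that were captured before deployment. The metric names and values below are hypothetical.

```python
def compare_to_baseline(baseline, current):
    """Return the percent change per metric, or None where no baseline exists."""
    changes = {}
    for metric, value in current.items():
        if metric in baseline:
            changes[metric] = (value - baseline[metric]) / baseline[metric] * 100
        else:
            # No "before" picture, so no credible "after" claim.
            changes[metric] = None
    return changes


# Hypothetical measurements taken before and after an AI rollout.
baseline = {"avg_handle_time_min": 12.0, "error_rate_pct": 4.0}
current = {"avg_handle_time_min": 9.0, "error_rate_pct": 3.0, "csat_score": 4.4}

changes = compare_to_baseline(baseline, current)
for metric, change in changes.items():
    label = "no baseline recorded" if change is None else f"{change:+.1f}%"
    print(f"{metric}: {label}")
```

Here the satisfaction score may well have improved, but because nobody recorded it beforehand, the improvement cannot be demonstrated.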

Expand the definition of value. If your business case only tracks cost reduction, you're capturing maybe 30% of the actual benefit. Include operational improvements, quality metrics, employee experience, decision velocity, and capability development.

Match the timeline to the value type. Efficiency gains show up in weeks. Process transformation shows up in quarters. Capability building shows up in years. One ROI review at month three will systematically undervalue the latter two.
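The timeline principle can be sketched as a lookup of when each value type is fair to review. The month thresholds below are hypothetical stand-ins for "weeks", "quarters", and "years"; an organisation would set its own.

```python
# Hypothetical earliest month at which each value type can fairly be reviewed.
EARLIEST_REVIEW_MONTH = {
    "efficiency": 1,               # gains show up in weeks
    "process_transformation": 6,   # gains show up in quarters
    "capability_building": 24,     # gains show up in years
}


def reviewable_at(month):
    """Return the value types that can fairly be assessed at the given month."""
    return [
        value_type
        for value_type, earliest in EARLIEST_REVIEW_MONTH.items()
        if month >= earliest
    ]


print(reviewable_at(3))
print(reviewable_at(24))
```

With these assumed thresholds, a single review at month three can only judge efficiency gains, which is exactly how the other two value types end up being scored as zero.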

Separate "we can't measure it" from "it's not working." These are fundamentally different problems. The first requires better instrumentation. The second requires a different strategy. Conflating them kills good projects and funds bad ones.

Report honestly. The temptation is to either oversell AI results by cherry-picking the best demos, or undersell them by only counting hard cost savings. Neither serves the organisation. The goal is an accurate picture of value delivered across all dimensions: financial, operational, and capability.

As IBM's research noted, only 6% of organisations still pursue AI in an ad hoc fashion, down from 19% a year earlier. The shift toward strategic, measured implementation is happening. The question is whether your measurement approach is keeping up.

The bigger picture

We're in a peculiar moment. Organisations are investing at unprecedented scale in a technology they overwhelmingly believe is transformative, while simultaneously lacking the frameworks to evaluate whether their specific investments are delivering.

This isn't sustainable. CFOs will demand proof. Boards will demand accountability. And organisations that can't demonstrate returns will pull back, not because AI failed, but because their measurement approach did.

The irony is that the frameworks exist. Microsoft, IBM, ISACA, and others have published credible approaches. The gap isn't intellectual. It's operational. Most organisations haven't yet invested in the measurement infrastructure that makes AI accountability possible.

For anyone leading AI initiatives today, this might be the highest-leverage problem to solve. Not which model to use or which use case to pursue, but how to build an honest, comprehensive system for tracking whether any of this is actually working.

Nvidia CEO Jensen Huang compared forcing engineers to justify AI with hard ROI upfront to asking a child to make a business plan for a hobby. There's wisdom in that. Experimentation needs room to breathe. But there's a difference between giving experimentation room and having no measurement at all. The organisations that will win this cycle are the ones that find the balance: enough patience to let AI value compound, enough rigour to prove that it is.

Because the $644 billion that Gartner forecasts will be spent on generative AI in 2025 deserves better than anecdotes and assumptions.